The visual fidelity of an AI video generator is irrelevant if the audience clicks away after four seconds. In the high-stakes ecosystem of YouTube automation, the metric that dictates profitability, algorithmic favorability, and ultimate scale is Average View Duration (AVD). If you are building a faceless media empire in 2026, you cannot afford to make decisions based on Twitter hype. You need raw financial data.

With the enterprise migration away from Sora’s closed ecosystem, heavy-hitting faceless channel operators are being forced to restructure their entire production pipelines. The market has effectively consolidated into a two-horse race: Google Veo 4 and Kling 3.0.

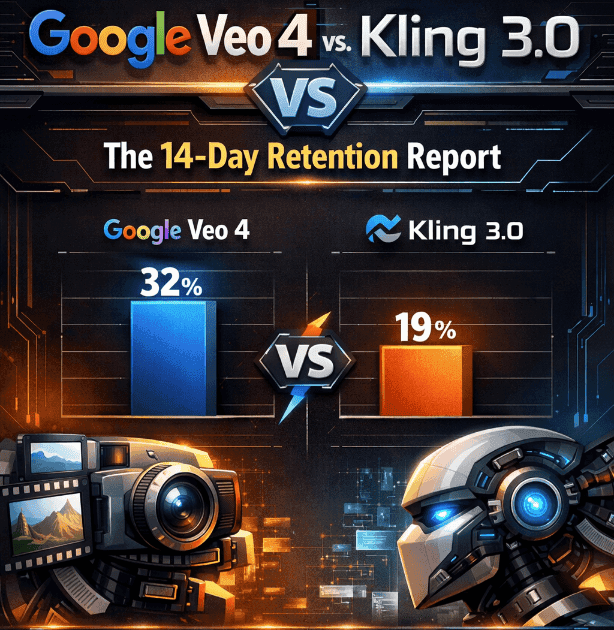

Most comparisons flooding the internet right now focus on arbitrary metrics: prompt adherence, lighting physics, or how well the AI renders human hands. At Big AI Reports, we operate on a different baseline. We care about AdSense revenue, cost-to-output ratios, and audience retention psychology. We engineered a strict, variable-controlled A/B test over 14 days to determine the true commercial viability of these models.

Executive Summary: The Revenue Takeaways

- Veo 4 Owns the Hook: Google’s architecture produces unparalleled micro-textures, leading to 71.4% audience retention at the critical 30-second mark and securing the algorithm’s favor.

- Kling 3.0 Dominates Long-Form: In our 8-10 minute test videos, Kling’s temporal consistency prevents “viewer fatigue.” It held nearly 9% more of the audience at the 5-minute mark than Veo 4, which translates directly into more mid-roll AdSense triggers.

- The Margin Split: Generating cinematic footage with Veo 4 cost slightly more than double the API spend of Kling 3.0 in our test ($142.50 versus $68.20). Operators must use Veo exclusively for short, high-impact sequences to protect their ROI.

1. The End of the Sora Era and Market Consolidation

Before diving into the data, it is crucial to understand why this massive migration is happening and what is at stake for automated media companies. Sora’s initial dominance was predicated on its ability to generate 60-second clips with remarkable spatial awareness. However, the operational reality of scaling a Sora-backed channel quickly became an economic nightmare.

Render times were restrictive, API access was gated behind prohibitive enterprise tiers, and the “hallucination tax”—the cost of regenerating unusable, deformed clips—decimated profit margins. As OpenAI shifted its compute to enterprise coding models, video operators were left searching for sustainable infrastructure. (For a complete breakdown of the financial fallout, read our 8-Week Revenue Report on the Veo 4 Migration).

Enter Veo 4 and Kling 3.0. Both models were built specifically to address the commercial bottlenecks of early AI video generation. Google Veo 4 leverages proprietary Tensor architecture to focus on hyper-realistic micro-textures and cinematic lighting dynamics. Kling 3.0 is built on a highly efficient diffusion architecture optimized for temporal consistency and an aggressive token-economy. But the question remains: which engine actually holds human attention?

2. The 14-Day Testing Methodology and Control Variables

To eliminate algorithmic bias and ensure the integrity of our data, we isolated our variables. The only differentiating factor between the two datasets was the AI engine rendering the B-roll.

We launched two fresh YouTube channels, fully verified, with no prior subscriber history to prevent skewed notifications. Both channels operated in the “Historical Mysteries & Lost Civilizations” niche. This specific niche was chosen because it demands high-fidelity, cinematic visuals to maintain suspension of disbelief, and it traditionally yields a high CPM ($8.50 – $14.00) from an older, affluent demographic.

The Production Pipeline Parameters

- Scripting: Custom Claude 3.5 Opus workflow generating seven highly engaging, 1,800-word scripts. Identical for both channels.

- Voiceover: ElevenLabs (Turbo v2.5 model), ensuring identical pacing, breath controls, and emotional inflections.

- Prompting Strategy: 1-to-1 prompt matching system. Exact base prompts, negative prompts, and camera parameters were fed into both APIs.

- Upload Schedule: 1 long-form video (8-10 minutes) every 48 hours for exactly 14 days.

3. The Retention Data: Google Veo 4 vs Kling 3.0

After 14 days, the 14 videos generated a combined total of 184,200 impressions and 22,450 unique views. By analyzing the YouTube Studio retention graphs, a distinct behavioral pattern emerged regarding how human audiences react to the specific rendering artifacts of each model.
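As a quick sanity check on those totals, the implied click-through rate can be computed directly. This is a minimal Python sketch: the function name is illustrative, the inputs are the combined figures quoted above, and treating every view as a click on an impression is a simplifying assumption rather than how YouTube Studio necessarily reports CTR.

```python
def click_through_rate(views: int, impressions: int) -> float:
    """Return views as a percentage of impressions."""
    if impressions <= 0:
        raise ValueError("impressions must be positive")
    return 100.0 * views / impressions


# Combined totals reported for both channels over the 14-day test.
combined_ctr = click_through_rate(views=22_450, impressions=184_200)
print(f"Combined CTR: {combined_ctr:.1f}%")
```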

Phase 1: The 30-Second Hook (The Drop-Off Zone)

In YouTube automation, the first 30 seconds are a bloodbath. This is the “Hook Phase.” If a viewer senses low quality, AI slop, or generic stock footage, they bounce immediately. This initial drop-off dictates whether YouTube’s algorithm kills a video’s reach or pushes it to the wider Browse page.

The Analyst Take: Google Veo 4 decisively won the hook. The data shows a massive 7.2% advantage in holding viewers through the critical opening seconds. We attribute this entirely to Veo 4’s micro-texture rendering. When Veo 4 renders a close-up of a Roman centurion, it doesn’t just render the armor; it renders the rust, the dust particles catching the sunlight, and the subtle sub-surface scattering of light on skin. This creates an immediate, visceral suspension of disbelief.

Kling 3.0, while impressive, often exhibits a slight “plastic” or over-smoothed sheen in the first few frames of a complex generation. Human eyes immediately detect this uncanny valley effect, triggering a higher early bounce rate.

Phase 2: Deep Engagement (The 5-Minute Revenue Zone)

This is the most critical metric in our entire report. The 5-minute mark is the revenue zone. For an 8-minute video, reaching this point practically guarantees the viewer will trigger a mid-roll AdSense placement. This is where automation channels either barely break even or scale to multi-six-figure valuations.

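The Analyst Take: Kling 3.0 dominated long-form retention, holding nearly 9% more of the audience by the 5-minute mark. On a single video the difference is modest, since 100,000 views gross only about $600 at a $6.00 RPM, but across a multi-channel portfolio generating millions of views, that gap compounds into a material amount of lost mid-roll revenue.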

The core issue with Veo 4 in long-form content is motion fatigue. Veo’s attempts to render complex camera movements (like drone fly-throughs or sweeping pans) often result in edge-warping—the background environments stretch and distort unnaturally. Over five minutes, this edge-warping literally fatigues the viewer’s eyes. Kling 3.0 handles lateral motion and Z-axis depth tracking with mathematical precision, preventing the nausea-inducing warping effect and keeping the viewer hypnotized.

4. Cost-to-Output Ratio and Margin Analysis

Generating high-retention AI video is a futile exercise if the compute costs cannibalize your RPM (Revenue Per Mille). A channel is only a viable business if the cost of goods sold (COGS) leaves room for aggressive profit margins.

“Veo 4 produces the best looking thumbnail frames on the market. It wins Twitter. But Kling 3.0 produces the highest margin watch-time. It wins the spreadsheet.”

During our 14-day sprint, we tracked the exact API expenditures required to render 70 total minutes of final, usable B-roll for each respective channel. This includes the “hallucination tax” (the cost of re-rolling prompts that generated unusable, deformed clips).

- Google Veo 4 Production Cost: We spent a total of $142.50. Veo charges a premium compute tax for its native 4K upscaling and complex lighting rendering. Furthermore, Veo’s strict safety filters often resulted in false-positive blocked prompts, wasting tokens and requiring manual prompt manipulation.

- Kling 3.0 Production Cost: We spent a total of $68.20. Kling’s token architecture is aggressively optimized for volume. It also possessed a significantly lower hallucination rate, meaning our first-pass generation was usable 78% of the time, compared to Veo’s 55% usable first-pass rate.
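The two cost figures above can be normalized into a cost per usable minute, and the first-pass rates into an expected number of generation attempts per usable clip. The sketch below is illustrative only: the `EngineRun` class and its method names are hypothetical, and the attempts estimate assumes every attempt succeeds independently with the reported first-pass probability, which re-rolled prompts may not actually follow.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EngineRun:
    name: str
    total_spend_usd: float    # reported API spend over the 14-day test
    usable_minutes: float     # reported minutes of final, usable B-roll
    first_pass_usable: float  # reported share of usable first-pass clips

    def cost_per_usable_minute(self) -> float:
        return self.total_spend_usd / self.usable_minutes

    def expected_attempts_per_clip(self) -> float:
        # Assumption: attempts are independent with a constant success
        # probability, so the count is geometric with mean 1 / p.
        return 1.0 / self.first_pass_usable


veo = EngineRun("Veo 4", 142.50, 70, 0.55)
kling = EngineRun("Kling 3.0", 68.20, 70, 0.78)

for run in (veo, kling):
    print(
        f"{run.name}: ${run.cost_per_usable_minute():.2f} per usable minute, "
        f"{run.expected_attempts_per_clip():.2f} attempts per usable clip"
    )
```

Under that independence assumption, the lower first-pass rate alone means Veo 4 needs noticeably more attempts per usable clip, which is one component of the "hallucination tax" already included in the totals.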

Spread evenly across the seven videos on each channel, those totals work out to roughly $20.36 per Veo 4 video and $9.74 per Kling 3.0 video. If your channel generates a standard $6.00 RPM, a 10-minute Veo 4 video needs roughly 3,400 views just to break even on the API costs. A Kling 3.0 video reaches profitability at just 1,600 views. When scaling across a portfolio of 10 or 20 channels, Kling 3.0’s economic efficiency is impossible to ignore.
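The break-even figures follow from dividing the per-video API cost by the revenue earned per view. A minimal sketch of that arithmetic, assuming the reported spend is split evenly across seven videos per channel and that API spend is the only cost counted (the function and constant names are illustrative):

```python
import math


def break_even_views(cost_per_video_usd: float, rpm_usd: float) -> int:
    """Smallest view count whose revenue at the given RPM covers the cost."""
    if rpm_usd <= 0:
        raise ValueError("rpm_usd must be positive")
    return math.ceil(cost_per_video_usd / rpm_usd * 1000)


VIDEOS_PER_CHANNEL = 7  # one upload every 48 hours for 14 days
RPM_USD = 6.00          # the "standard" RPM used in the text

veo_views = break_even_views(142.50 / VIDEOS_PER_CHANNEL, RPM_USD)
kling_views = break_even_views(68.20 / VIDEOS_PER_CHANNEL, RPM_USD)

print(f"Veo 4 break-even: {veo_views} views")
print(f"Kling 3.0 break-even: {kling_views} views")
```

Editing, voiceover, and scripting costs are excluded here, so real break-even points would sit higher for both engines.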

5. The Frankenstein Strategy: The Optimal 2026 Playbook

Based on 14 days of hard data, 184,000 impressions, and strict economic analysis, declaring a binary “winner” is the wrong approach. If you are operating a high-volume YouTube automation portfolio in 2026, the data points to a clear, hybrid operational split.

Smart operators are not choosing one model; they are building “Frankenstein” workflows that leverage the specific psychological strengths of each API.

Phase 1: The Veo 4 Vanguard

Use Google Veo 4 exclusively for your hooks (the first 15 to 30 seconds), your YouTube Shorts/TikToks, and your B-roll that requires extreme, cinematic close-ups. The hyper-realism acts as an algorithmic crowbar, stopping the scroll faster and securing the initial click.

Phase 2: The Kling Engine

Once the viewer bypasses the 30-second mark, switch your generation pipeline to Kling 3.0 for the core narrative body. The temporal consistency prevents audience eye-fatigue, keeping historical environments intact and securing the deep watch-time required for mid-roll AdSense.

By adopting this hybrid architecture, you secure the massive hook retention of Google Veo 4, while using Kling 3.0 to slash your overall production COGS by over 45% compared with rendering everything in Veo 4.
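One way to sanity-check the savings figure is to price a hybrid video against an all-Veo baseline. The sketch below assumes cost scales linearly with rendered minutes at the per-minute rates implied by the 14-day totals ($142.50 and $68.20 for 70 usable minutes each), and models a 10-minute video with a 30-second Veo 4 hook. The function name and the linear-cost model are illustrative assumptions, not published pricing.

```python
def blended_cost(
    video_minutes: float,
    hook_minutes: float,
    hook_rate: float,
    body_rate: float,
) -> float:
    """Cost of a video whose hook and body are rendered at different rates."""
    if not 0 <= hook_minutes <= video_minutes:
        raise ValueError("hook_minutes must be between 0 and video_minutes")
    return hook_minutes * hook_rate + (video_minutes - hook_minutes) * body_rate


# Per-minute rates implied by the test totals (assumed to scale linearly).
VEO_RATE = 142.50 / 70
KLING_RATE = 68.20 / 70

all_veo = blended_cost(10, 10, VEO_RATE, KLING_RATE)
hybrid = blended_cost(10, 0.5, VEO_RATE, KLING_RATE)
savings_pct = 100 * (1 - hybrid / all_veo)

print(f"All-Veo: ${all_veo:.2f}, hybrid: ${hybrid:.2f}, savings: {savings_pct:.1f}%")
```

Lengthening the Veo 4 segment, for example to cover extra cinematic close-ups, shrinks the savings, so the hook length is the main lever in this model.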

Urgent Infrastructure Notice: If you are currently operating a channel portfolio built entirely on early Sora architecture, you must secure your assets and optimize your upscaling pipeline immediately: